School approaches to filtering internet content

As social media, YouTube, and gaming become more educationally relevant, how do we leverage their educational potential while keeping student data safe and teaching students digital citizenship?

Lock it down! “We need to keep everyone safe.”

Open it up! “It’s how the real world operates.”

I’ve heard strong arguments for both sides of the coin and have seen successes and challenges in both cases.

For example, in one school the filtering was so widespread that the IT department spent a significant amount of time working through requests to unblock sites, and teachers were frustrated because students were trying to do research and class came to a standstill.

Along those lines, in another example, the devices issued by a school were so locked down that students preferred to forgo the headache and used their phones instead. Conversely, even with the best firewalls and filtering, students can find ways around anything to access what they want.

How do we determine the appropriate balance?

In setting the context, there are two pieces of legislation we should look at to help understand the landscape:

Digital privacy acts that affect students

COPPA:

COPPA (the Children’s Online Privacy Protection Act) is a United States law that makes it illegal for commercial websites, plugins, or ad networks to collect identifying information about kids under 13 without parental consent.

The law demands that information-collecting companies:

- Maintain stringent security measures to keep online personal data safe;

- Get verifiable parental consent before digitally collecting personal information from children under 13;

- Publish/post conspicuous, easy-to-understand privacy policies;

- Implement a system which notifies parents and guardians of policy and procedure changes;

- Provide a way for parents/guardians to opt-out or delete their child’s information at any time.

CIPA:

Schools and libraries subject to CIPA (the Children’s Internet Protection Act) are required to adopt and implement an Internet safety policy addressing:

- Access by minors to inappropriate matter on the Internet;

- The safety and security of minors when using electronic mail, chat rooms and other forms of direct electronic communications;

- Unauthorized access, including so-called “hacking,” and other unlawful activities by minors online;

- Unauthorized disclosure, use, and dissemination of personal information regarding minors; and

- Measures restricting minors’ access to materials harmful to them.

What are some mechanisms for filtering?

Digging deeper into the question, I unequivocally support the spirit of the acts above. But I’m more interested in looking at how schools filter things such as social media, gaming, IM/chat, and videos, which, according to the American Association of School Librarians, are the top four most-filtered categories.

As someone who travels to a lot of schools in Vermont, I see great variance in access to things like Facebook and YouTube. Some schools provide no access to Facebook or Google+, while others allow it and are committed to teaching students how to interact in a digital world. Additionally, some schools use YouTube as an anchor for material and some simply block it. In some cases pedagogy and filtering do not seem to align, especially when looking at the SAMR wheel.

Some approaches in action

Let’s look at two instances in which schools tackled portions of internet filtering: inappropriate content on YouTube and whole network access expectations.

The “YouTube Dilemma”

We recently worked with a school that would like to provide access to YouTube videos but finds some of the comment content problematic. Strangely, they are not alone in wanting their students to focus on the videos rather than having their learning environment interrupted by unpleasant opinions and advertising. We found a number of tools that can help with this particular YouTube dilemma:

- Viewpure: a browser-based tool that lets you enter a YouTube URL; the software then strips away the surrounding advertising and comments, and even the pesky 0:15 ads at the beginning. Try it with this takedown of Matt Damon’s life expectancy on Mars.

- This Chrome extension, Hide YouTube Comments, does what it says on the tin: it hides comments on YouTube videos.

- This Firefox plugin, No YouTube Comments, works similarly for the Firefox browser.

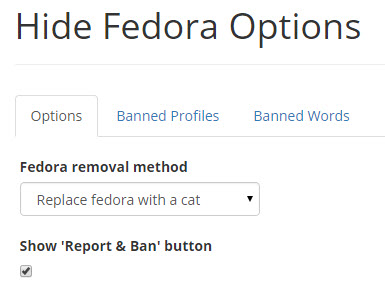

- This spectacular piece of coding, Hide Fedora, gives you the option of replacing negative comments with cats in both Chrome and Firefox.
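For schools that would rather not depend on a third-party site or extension, the same basic idea can be approximated by linking to a video's embed-only player, which does not show the comment section. What follows is a minimal sketch, not how Viewpure or any of the extensions above actually work. The function names `youtube_video_id` and `distraction_free_url` are hypothetical, and the sketch assumes only the common `watch?v=` and `youtu.be/` link formats. It also does not remove ads by itself.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hosts we treat as YouTube watch pages (an assumption; other variants exist).
YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com"}


def youtube_video_id(url: str) -> Optional[str]:
    """Return the video ID from a common YouTube link, or None if not recognized."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()

    # Short links look like https://youtu.be/<id>
    if host == "youtu.be":
        video_id = parsed.path.lstrip("/").split("/")[0]
        return video_id or None

    # Standard links look like https://www.youtube.com/watch?v=<id>
    if host in YOUTUBE_HOSTS and parsed.path == "/watch":
        values = parse_qs(parsed.query).get("v")
        return values[0] if values else None

    return None


def distraction_free_url(url: str) -> Optional[str]:
    """Build an embed-only player URL, which omits the comment section."""
    video_id = youtube_video_id(url)
    if video_id is None:
        return None
    return f"https://www.youtube-nocookie.com/embed/{video_id}"


if __name__ == "__main__":
    print(distraction_free_url("https://www.youtube.com/watch?v=abc123XYZ_-"))
```

A teacher could run a list of lesson links through `distraction_free_url` and share the results instead of the original watch-page links. Any link the function does not recognize comes back as `None`, so it can be checked by hand.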

Student network use agreements

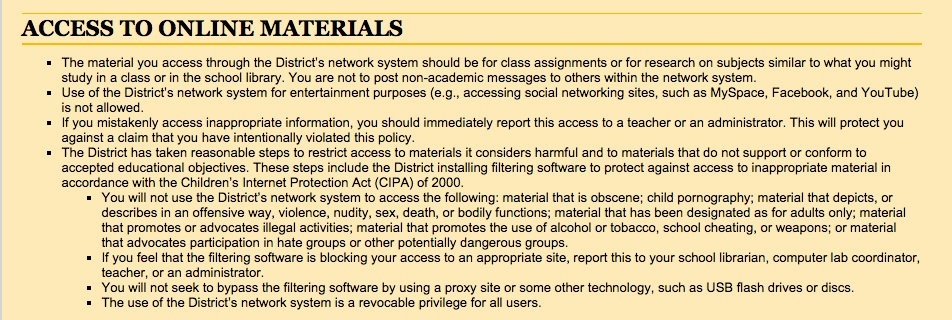

Cupertino High School, in Sunnyvale, California, filters some material at the router to comply with CIPA (“…the District has taken reasonable steps to restrict access to materials it considers harmful”), but also spells out explicit expectations for student use of the network as part of their network use agreement for students:

That’s one small part of the agreement. It’s pretty long and very specific, but making sure everyone knows where the boundaries are — all the boundaries — is a great middle ground between locking down everything in Creation and expecting that students will always know the correct course of action for dealing with any and every online resource.

How to determine the right balance?

Schools need to engage in conversations with students, educators (tech folks included), and parents to understand what is out there and how it can be used to support learning. The same conversations should also address concerns, so that together the community can develop a vision, one that may need to be revisited often because things change so quickly.

A few thoughts that might be helpful:

- There cannot be enough emphasis placed on digital citizenship;

- Policy and practice need to have a close alignment;

- There should be a thoughtful union of technological and pedagogical decision makers;

- Be transparent with our communities;

- Have a clear process for examining new tools.
